Why DCP Compute Credits Exist

Traditional cloud providers bill for availability—you rent virtual machines by the hour (e.g. a c6g.xlarge or g4dn.xlarge instance) with specific CPU/GPU specs, region, tenancy, and operating system.

The problem?

- cloud users pay for time, not output;

- costs can be high, unpredictable, and complex;

- over 200 instance types make choosing “the right combination of machines” a nightmare for regular developers and researchers, requiring specialized teams; and

- DevOps, FinOps, and cloud system administration overhead adds both direct and indirect costs.

DCP eliminates all of this complexity and overhead. You don’t rent instances; you simply pay for the results your job produces.

Pricing and Exchange Rates

As of January 2026, one Compute Credit, 1.000 ⊇, can be bought or sold for USD $0.0003171 (≈ CAD $0.0004450 at 1.4034 CAD/USD). Because DCP prices are currently denominated in USD, the CAD value of credits will fluctuate with exchange rates. While we may decouple from USD in the future, the current peg allows DCP to operate in the global compute market.
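As a quick check of these figures, the conversion can be sketched in Python. The two rates are the ones quoted above; the function names are illustrative only and are not part of any DCP API.

```python
from decimal import Decimal

# Rates quoted in the text (January 2026); real rates will drift.
USD_PER_CREDIT = Decimal("0.0003171")
CAD_PER_USD = Decimal("1.4034")

def credits_to_usd(credits):
    """Value of a Compute Credit balance in USD at the quoted peg."""
    return Decimal(credits) * USD_PER_CREDIT

def credits_to_cad(credits):
    """Same balance in CAD; this figure moves with the exchange rate."""
    return credits_to_usd(credits) * CAD_PER_USD

print(credits_to_usd(100_000))  # USD value of 100,000 credits
print(credits_to_cad(100_000))  # CAD value at the quoted exchange rate
```

Decimal arithmetic is used here because per-credit prices are small fractions of a cent, where binary floating point can introduce rounding noise.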

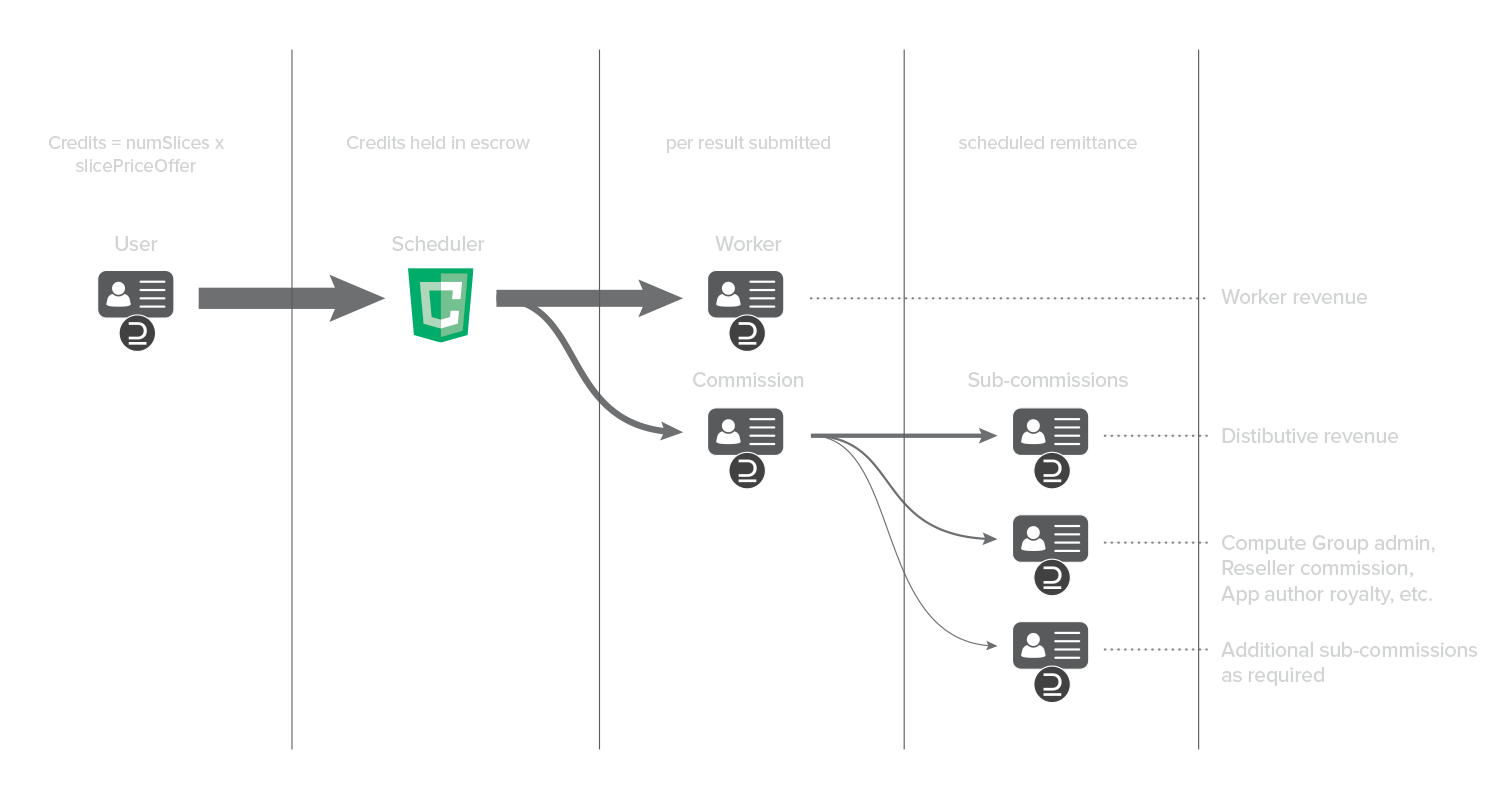

Compute Credit Flow on DCP

Compute Credits flow from a user deploying a Job (spend Compute Credits) to the Worker nodes executing Job slices (earn Compute Credits), with coordination by the DCP Scheduler and a percentage commission retained by the Scheduler (Scheduler Commission). Worker payments and Scheduler Commission are transacted on each result.

The Scheduler Commission is configurable on a per–Compute Group basis and may be subdivided into an arbitrary number of sub-commissions to support fees or royalties for multiple beneficiaries, including but not limited to:

- Scheduler operator

- Compute Group administrator

- Application author / data provider / AI model provider

- Network provider

- Reseller or distributor

- Charitable donation or scientific computing grants

The subdivision of the Scheduler Commission into sub-commission accounts is not transacted on each result. Instead, sub-commissions are remitted as recurring, scheduled batch transactions (e.g., daily, weekly, monthly, or as required).

Figure 1: Compute Credit flow when deploying a Job on DCP
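The two-stage settlement described above (a per-result split between Worker and Scheduler Commission, followed by a periodic batch split into sub-commissions) can be sketched as follows. This is a minimal illustration, not the Scheduler's implementation: the function names, the 20% rate, and the beneficiary shares are all hypothetical.

```python
from fractions import Fraction

def settle_result(slice_price, commission_rate):
    """Per-result step: split one payment into (worker payout, commission)."""
    commission = slice_price * commission_rate
    return slice_price - commission, commission

def batch_sub_commissions(accrued_commission, shares):
    """Batch step: divide accrued commission among beneficiaries.

    `shares` maps a beneficiary name to its fraction of the commission;
    the fractions must sum to exactly 1.
    """
    if sum(shares.values()) != 1:
        raise ValueError("sub-commission shares must sum to 1")
    return {name: accrued_commission * share for name, share in shares.items()}

# Hypothetical Compute Group: 20% commission, three beneficiaries.
rate = Fraction(1, 5)
shares = {
    "scheduler_operator": Fraction(1, 2),
    "group_admin": Fraction(1, 4),
    "app_author": Fraction(1, 4),
}

accrued = Fraction(0)
worker_total = Fraction(0)
for _ in range(1000):                 # 1,000 results at 10 credits each
    payout, commission = settle_result(Fraction(10), rate)
    worker_total += payout            # transacted on each result
    accrued += commission             # held until the next batch run

remitted = batch_sub_commissions(accrued, shares)  # e.g. a daily batch
```

Keeping the sub-commission split out of the per-result path means each result triggers only two transfers, however many beneficiaries a Compute Group defines.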

Buying and Selling Compute Credits

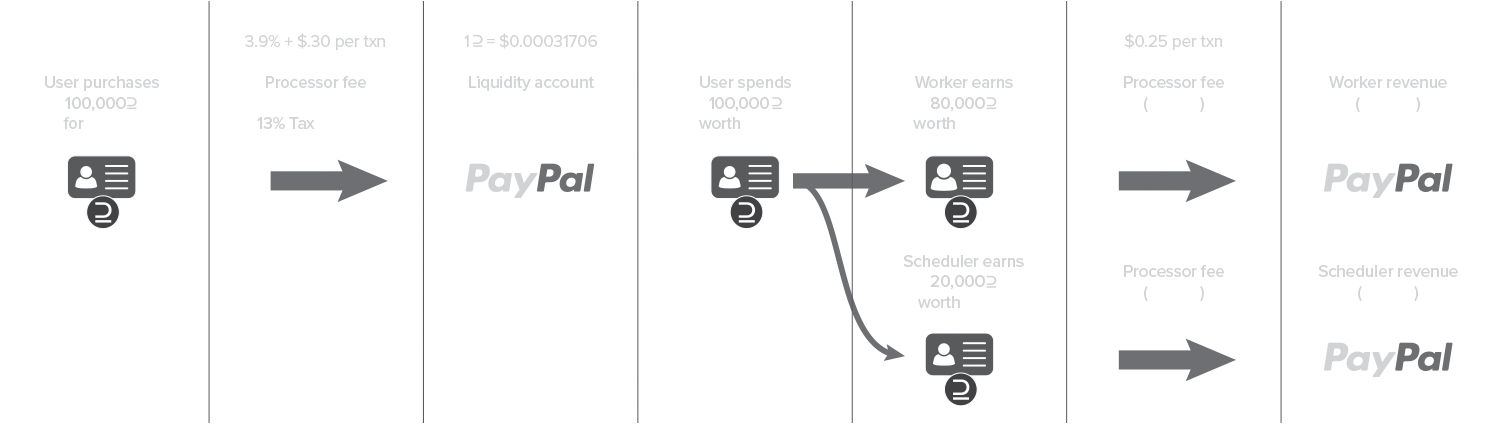

Compute Buyers buy Compute Credits in order to deploy Jobs. Compute Sellers sell Compute Credits earned by the Worker nodes that they control.

Compute Credits can be purchased by credit card via the DCP Portal (dcp.cloud), which uses PayPal Braintree for pay-ins. Once a Job is deployed, Compute Credits are disbursed to Workers, minus the Scheduler Commission and its related sub-commissions.

Account owners who receive Compute Credits can sell them at any time via the DCP Portal by supplying a PayPal account. Because each sale incurs a $0.25 PayPal Payouts fee, we suggest accumulating credits for a while before selling.

Large Compute Credit purchases can be arranged manually in coordination with Distributive to avoid the 3.9% PayPal Braintree fee.

Here is an example lifecycle of 100,000 Compute Credits, with HST (Harmonized Sales Tax) applied and a 20% Scheduler Commission.

Figure 2: Buy → Deploy → Work → Sell : Payment and Compute Credit Flow with DCP
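The arithmetic behind such a lifecycle can be approximated with the sketch below. It uses the credit price, the 3.9% Braintree fee, the $0.25 payout fee, and the 20% commission quoted in this document. The 13% HST rate, the choice to apply tax and fee each to the base price, and the single payout at the end are assumptions made for illustration, so the results may not match Figure 2 exactly.

```python
from decimal import Decimal

USD_PER_CREDIT = Decimal("0.0003171")  # peg quoted in the text
BRAINTREE_FEE = Decimal("0.039")       # 3.9% pay-in fee quoted in the text
PAYOUT_FEE_USD = Decimal("0.25")       # PayPal Payouts fee quoted in the text
COMMISSION = Decimal("0.20")           # 20% Scheduler Commission from the example
HST_RATE = Decimal("0.13")             # ASSUMPTION: 13%; HST varies by province

def buy(credits):
    """USD charged to the buyer (ASSUMES tax and fee each apply to the base price)."""
    base = credits * USD_PER_CREDIT
    return base + base * HST_RATE + base * BRAINTREE_FEE

def deploy(credits):
    """Split spent credits into (Worker earnings, Scheduler Commission)."""
    commission = credits * COMMISSION
    return credits - commission, commission

def sell(credits):
    """USD received when earned credits are sold in a single payout."""
    return credits * USD_PER_CREDIT - PAYOUT_FEE_USD

credits = Decimal(100_000)
paid = buy(credits)
worker_credits, commission_credits = deploy(credits)
received = sell(worker_credits)  # ASSUMES one seller holds all Worker earnings

print(f"buyer pays       USD {paid:.2f}")
print(f"workers earn     {worker_credits:.0f} credits")
print(f"commission       {commission_credits:.0f} credits")
print(f"seller receives  USD {received:.2f}")
```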

How Job Pricing Works

When a user deploys a job on DCP, the platform dynamically measures the computational characteristics of each Job slice and tracks an exponential moving average of them in the Slice Characteristics Vector:

[ reference-CPU-hours, reference-GPU-hours, input-data-gigabytes, output-data-gigabytes ]

DCP Job slices are priced based on these factors, not the underlying hardware used.
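A per-element exponential moving average of this vector might look like the sketch below. The smoothing factor `alpha` and the function name are assumptions; the document does not state the weighting DCP actually uses.

```python
def update_characteristics(average, observed, alpha=0.2):
    """Blend one newly measured slice into the running average.

    Both vectors are ordered as
    [ref-CPU-hours, ref-GPU-hours, input-GB, output-GB].
    `alpha` (hypothetical) is the weight given to the newest measurement.
    """
    if len(average) != len(observed):
        raise ValueError("vectors must have the same length")
    return [alpha * o + (1 - alpha) * a for a, o in zip(average, observed)]

# Running average so far, then a heavier slice arrives.
average = [2.0, 0.0, 1.0, 1.0]
observed = [4.0, 0.0, 3.0, 1.0]
average = update_characteristics(average, observed, alpha=0.5)
```

A larger `alpha` makes the average react faster to changes in slice complexity; a smaller one smooths out noisy measurements.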

Because hardware performance varies widely, DCP benchmarks each participating machine against a reference standard derived from the overall network performance. This allows computational effort to be expressed in reference CPU-hours and reference GPU-hours, the units used in the Slice Characteristics Vector, making job pricing consistent regardless of the underlying hardware.

That means:

- slower devices still earn the same per-result payout as faster ones;

- faster machines simply complete more results per hour; and

- users pay the same price per unit of computation, regardless of what hardware runs it.

This creates a simple pay-per-result model, eliminating the need for complicated instance selection, right-sizing, and burdensome DevOps and sysadmin overhead.
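To illustrate why per-result payouts are hardware-independent, the sketch below converts wall-clock time on two machines into reference hours. It assumes a simple model in which each machine's benchmark score is its speed as a multiple of the reference standard; the actual DCP benchmarking method is not described here, and all names and numbers are hypothetical.

```python
def reference_hours(wall_clock_hours, benchmark_score):
    """Convert time measured on one machine into reference hours.

    `benchmark_score` (hypothetical) is the machine's speed relative to the
    reference standard: 2.0 means twice as fast, 0.5 means half as fast.
    """
    return wall_clock_hours * benchmark_score

def results_per_hour(wall_clock_hours_per_slice):
    """How many slices of this size a machine completes in one hour."""
    return 1.0 / wall_clock_hours_per_slice

# The same slice run on a fast and a slow machine.
fast = reference_hours(wall_clock_hours=0.25, benchmark_score=2.0)
slow = reference_hours(wall_clock_hours=1.0, benchmark_score=0.5)

# Both machines did the same reference work, so the payout per result matches,
# but the fast machine finishes four results in the time the slow one finishes one.
fast_rate = results_per_hour(0.25)
slow_rate = results_per_hour(1.0)
```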

The Market Rate Algorithm

DCP continuously updates the cost of computation using a proprietary Market Rate Algorithm.

This algorithm maintains a Market Rate Vector, a four-element vector representing current pricing in Compute Credits:

[ price per reference-CPU-hour, price per reference-GPU-hour, price per input-data-gigabyte, price per output-data-gigabyte ]

When a user deploys a job on DCP by calling job.exec(), the following process occurs:

- Estimation Phase – The DCP Scheduler releases three of the job’s slices to DCP Workers in estimation mode.

- Benchmarking – As soon as the first result returns, the Scheduler takes that slice’s initial Slice Characteristics Vector and computes its dot product with the current Market Rate Vector to obtain the initial Market Value for each slice.

- Execution Phase – The job then transitions to running mode, the remaining slices are released for execution, and as results continue to return, the Slice Characteristics Vector is continuously updated using an exponential moving average for each element.

- Dynamic Bidding – Users can choose to run their job at the current Market Value, or specify a Slice Price in job.exec(price) that is higher or lower than Market Value.

  - Offering a higher Slice Price attracts more workers and delivers more results concurrently.

  - Offering a lower Slice Price reduces cost but may slow job completion.

Increasing the offered Slice Price raises a job’s network priority, similar to a rush order.

In any case, the job will never cost more than the number of slices multiplied by the Slice Price. On a commercial cloud, by contrast, it is easy to forget to shut down running instances and wake up to a fifty-thousand-dollar bill.
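The Market Value calculation and the cost ceiling described above reduce to a dot product and a multiplication. The sketch below shows both; the rate and characteristics values are invented for illustration, and the helper names are not part of the DCP API.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x * y for x, y in zip(a, b))

def slice_price(market_value, bid=None):
    """Use the user's bid if one was given, otherwise the Market Value."""
    return market_value if bid is None else bid

def max_job_cost(num_slices, price):
    """Upper bound on what a job can cost: slices times Slice Price."""
    return num_slices * price

# Hypothetical Market Rate Vector, in Compute Credits per
# [ref-CPU-hour, ref-GPU-hour, input-GB, output-GB].
market_rate = [100.0, 400.0, 10.0, 10.0]

# Hypothetical Slice Characteristics Vector from the first returned result.
characteristics = [0.5, 0.25, 0.125, 0.25]

market_value = dot(market_rate, characteristics)  # 50 + 100 + 1.25 + 2.5

at_market = max_job_cost(1000, slice_price(market_value))
rush = max_job_cost(1000, slice_price(market_value, bid=200.0))
```

Because the ceiling depends only on the slice count and the chosen Slice Price, it is known before the job finishes, whichever price the user picks.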

Dynamic Priority and Slice Pricing

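If parts of a job become more complex (requiring more CPU/GPU time per slice) while the offered Slice Price stays the same, the job pays less per unit of computation, so its payout rate may fall relative to other jobs.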

When this happens:

- the job’s network priority decreases automatically; and

- (soon to be implemented) the user receives an alert and can raise their offered Slice Price through the Jobs Dashboard to maintain performance, if desired.
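One way to picture this effect is to compare the fixed Slice Price with the slice’s current Market Value as its characteristics grow. The ratio below is only an illustrative measure; how the Scheduler actually derives network priority is not specified in this document, and all values are hypothetical.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x * y for x, y in zip(a, b))

def payout_ratio(slice_price, market_rate, characteristics):
    """Offered Slice Price relative to the slice's current Market Value.

    1.0 means the job pays exactly the market rate; below 1.0 it pays less
    per unit of computation than the market does.
    """
    return slice_price / dot(market_rate, characteristics)

market_rate = [100.0, 400.0, 10.0, 10.0]   # hypothetical credits per unit
offered = 153.75                           # Slice Price fixed at deploy time

early = [0.5, 0.25, 0.125, 0.25]           # characteristics at deployment
later = [1.0, 0.5, 0.25, 0.5]              # slices became twice as heavy

ratio_early = payout_ratio(offered, market_rate, early)
ratio_later = payout_ratio(offered, market_rate, later)
```

In this example the ratio halves, which is the situation in which a user might choose to raise the offered Slice Price.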

Worker Minimum Wages

DCP Workers (the compute providers on DCP) will soon be able to define their own Minimum Wage Vector, specifying the lowest acceptable payout rate per resource type (same dimensions and units as the Market Rate Vector). The dot product of the Minimum Wage Vector with the Slice Characteristics Vector is the minimum Slice Price at which a Worker is willing to execute the slice. If a job’s Slice Price falls below that threshold, the Worker simply won’t accept it.

Setting this threshold too high may cause the worker to remain idle; setting it too low may result in under-compensation. Under- or even non-compensation may be acceptable in voluntary computing contexts (e.g., scientific collaborations like SETI@home).
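The acceptance rule described above can be sketched as a threshold check. Since the feature is not yet released, this is only an illustration of the stated dot-product rule; the vector values and function names are hypothetical.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x * y for x, y in zip(a, b))

def minimum_slice_price(minimum_wage, characteristics):
    """Lowest Slice Price this Worker would accept for the slice."""
    return dot(minimum_wage, characteristics)

def accepts(slice_price, minimum_wage, characteristics):
    """True if the offered Slice Price meets the Worker's threshold."""
    return slice_price >= minimum_slice_price(minimum_wage, characteristics)

# Hypothetical Minimum Wage Vector, same units as the Market Rate Vector:
# credits per [ref-CPU-hour, ref-GPU-hour, input-GB, output-GB].
minimum_wage = [80.0, 300.0, 8.0, 8.0]
characteristics = [0.5, 0.25, 0.125, 0.25]

threshold = minimum_slice_price(minimum_wage, characteristics)  # 40 + 75 + 1 + 2

# A volunteer Worker can set every element to zero and accept any price.
volunteer_wage = [0.0, 0.0, 0.0, 0.0]
```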

Visualizing the DCP Compute Economy

Distributive is developing visual tools that let users and workers see:

- the current distribution of job payouts;

- relative placement of their own offers and wages; and

- market trends in compute pricing.

These visualizations will help participants make informed decisions about pricing, bidding, and resource contribution.

In Summary

Compute Credits simplify distributed computing economics. They replace traditional cloud billing complexity with a transparent, dynamic, and equitable pay-per-result model that rewards compute contribution and optimizes for what truly matters—computational results.